You've probably heard it a million times – data is everywhere. But in 2026, that's not just a buzzword. It's a reality you're living every day. Whether you're in healthcare, insurance, banking, or any other data-heavy sector, the challenge isn't collecting information – it's making sense of it. That's where data science steps in.

At Binariks, we work with organizations like yours to turn raw data into real business outcomes. In this article, we'll walk you through the top data science trends in 2026 across industries – what's emerging, what's gaining momentum, and how forward-thinking companies are applying these advances in data science technology to make smarter, faster decisions. You'll get a clear picture of what's next – and how to stay ahead.

Data science technology growth

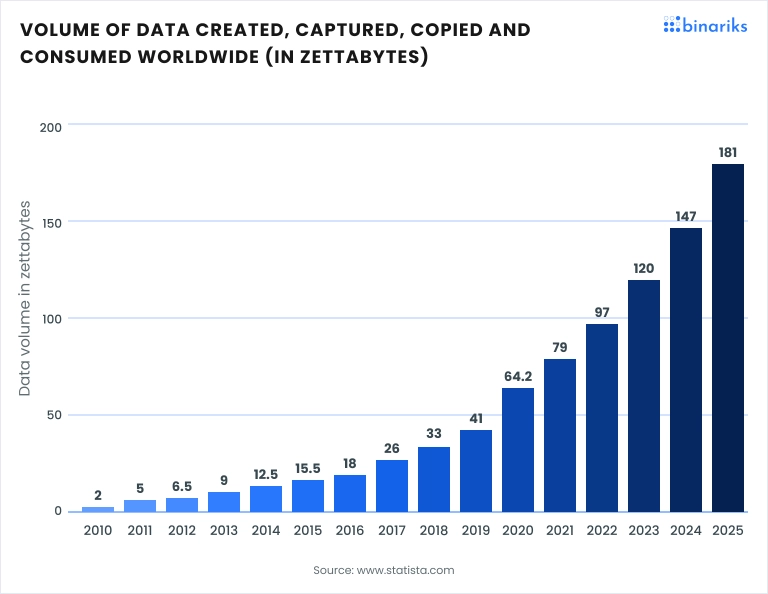

In 2026, the data landscape is expanding at an unprecedented rate. After 149 zettabytes of data were created, captured, and consumed in 2024, the global volume reached an estimated 181 zettabytes by the end of 2025. This underscores the critical need for advanced data science solutions to manage this vast pool of information and derive meaningful insights from it.

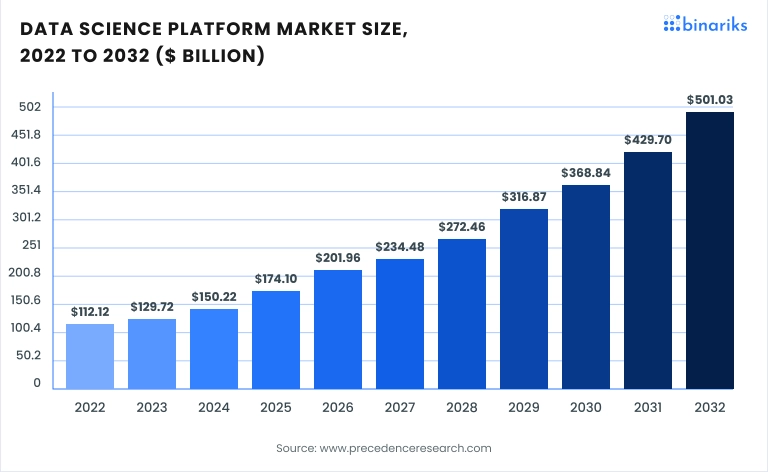

The data science platform market is responding robustly to this demand. Valued at $5.15 billion in 2025, it's expected to grow to $676.51 billion by 2034, reflecting a CAGR of 16.2%.

- This growth is mirrored in organizational investments. Approximately 55% of companies reported increasing their AI data science budgets by 25% or more in 2024, highlighting a strategic shift towards data-driven decision-making.

- Big data analytics has been adopted widely across sectors. For instance, 56% of healthcare centers have adopted predictive analytics, with higher rates in some countries, such as Singapore (92%).

- However, challenges persist. 59% of professionals identify a lack of data science expertise as a primary barrier to fully leveraging AI's potential. This skills gap emphasizes the importance of investing in talent development alongside technological advancements.

9 current trends in data science for 2026

Now, let's move to the top trending topics defining data science in 2026 and the years to come. The Binariks team selected the nine trends in this article based on the current state of the market, the evolving landscape of digital technologies, and consumer demand.

1. TinyML

TinyML refers to implementing machine learning models on tiny, low-power devices like microcontrollers, sensors, and IoT devices, bringing inference directly to the edge, without any cloud dependency.

In 2026, TinyML has moved well beyond a research curiosity. It's becoming a production-grade approach for industries where latency, bandwidth costs, or connectivity constraints make cloud-based ML impractical.

The core idea is simple but powerful: instead of sending raw sensor data to a server for processing, the device itself runs a lightweight model and returns only the result. A vibration sensor on a factory floor doesn't stream gigabytes of data – it runs a model locally, detects an anomaly, and sends a single alert. This reduces bandwidth consumption by orders of magnitude and enables real-time decisions in environments where a 200ms round-trip to the cloud is simply not acceptable.
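To make the "process locally, send only the result" pattern concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a deployable TinyML model: a simple RMS threshold stands in for a trained model, and the function names, the `tolerance` value, and the sample readings are all hypothetical.

```python
import math

def rms(samples):
    """Root-mean-square amplitude of one window of vibration samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def process_window(samples, baseline_rms, tolerance=2.0):
    """Run the on-device check and return a small alert dict, or None.

    Assumption: a fixed threshold replaces the trained model a real
    device would run. Only the alert would leave the device.
    """
    level = rms(samples)
    if level > tolerance * baseline_rms:
        return {"event": "vibration_anomaly", "rms": round(level, 3)}
    return None

# Hypothetical readings: a healthy window and a faulty one.
normal_window = [0.1, -0.1, 0.1, -0.1]
faulty_window = [0.5, -0.6, 0.55, -0.5]
baseline = rms(normal_window)

quiet = process_window(normal_window, baseline)   # nothing to send
alert = process_window(faulty_window, baseline)   # one small message
print(quiet, alert)
```

The design point is that the raw samples never leave the function: the only value that would travel over the network is the small alert dictionary.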

What's changed in 2026 is tooling maturity. Frameworks like TensorFlow Lite, Edge Impulse, and Arduino ML have made it significantly easier to train, compress, and deploy models on hardware with as little as 256KB of RAM. Quantization and pruning techniques now allow models that once required a GPU to run on a microcontroller drawing under 1 milliwatt.
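Quantization is easier to picture with numbers. The sketch below shows one common variant, symmetric 8-bit quantization, written in plain Python for readability. It is not the implementation used by any of the frameworks named above, and the weights are made-up values.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the integer codes."""
    return [q * scale for q in quantized]

# Hypothetical layer weights.
weights = [0.82, -0.41, 0.05, -1.27, 0.33]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, scale, max_error)
```

Each weight now fits in one byte instead of the four bytes of a 32-bit float, at the cost of a small, bounded rounding error. That trade is what lets a model fit into microcontroller memory.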

The use cases are expanding fast. In industrial maintenance, embedded models detect bearing failures from vibration signatures before they escalate. In healthcare, wearables like the Apple Watch and Fitbit already run on-device models for arrhythmia detection and fall recognition – without sending raw biometric data to a server. For organizations operating in remote environments, regulated industries, or cost-sensitive IoT deployments, TinyML is becoming the default architectural choice.

2. Predictive analytics

Predictive analytics is not a new concept, but what's changed in 2026 is the scale, speed, and accessibility at which it operates.

Where predictive models once required weeks of data preparation and specialist involvement, modern platforms can surface actionable forecasts in near real-time – embedded directly into operational workflows rather than sitting in a separate analytics layer.

The underlying shift is infrastructure. The combination of cloud-scale data warehouses, streaming data pipelines, and increasingly automated feature engineering has collapsed the distance between raw data and a deployed prediction. Organizations no longer need to batch-process last month's transactions to forecast next quarter's churn – they can act on signals as they emerge. In regulated industries, this is particularly consequential.

In healthcare, predictive models now flag patients at risk of hospital readmission within 30 days, enabling proactive outreach before a crisis develops. Insurers use real-time risk scoring to adjust underwriting decisions dynamically, rather than relying on static actuarial tables. In fintech, fraud detection models evaluate transaction risk in under 50 milliseconds, making a decision before the payment clears.
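As a rough illustration of what real-time risk scoring looks like at the moment of decision, here is a minimal Python sketch of a logistic score applied to a single transaction. The feature names, coefficients, and threshold are hypothetical; a production model would learn them from historical data and would typically be far richer.

```python
import math

# Hypothetical coefficients; a real model would be trained, not hand-set.
WEIGHTS = {"amount_zscore": 1.2, "new_device": 1.5, "foreign_ip": 0.9}
BIAS = -4.0

def fraud_probability(features):
    """Logistic score: weighted sum of features squashed into (0, 1)."""
    z = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))

def decide(features, review_threshold=0.5):
    """Turn the probability into an operational decision."""
    if fraud_probability(features) >= review_threshold:
        return "review"
    return "approve"

typical = {"amount_zscore": 0.2, "new_device": 0, "foreign_ip": 0}
suspicious = {"amount_zscore": 3.0, "new_device": 1, "foreign_ip": 1}
print(decide(typical), decide(suspicious))
```

Scoring is a handful of multiplications and one exponential, which is why a decision can fit inside a tight latency budget once the features are available.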

3. AutoML

Automated machine learning has been discussed for years as the technology that would democratize data science. In 2026, that promise is finally being realized – not because AutoML has replaced data scientists, but because it has fundamentally changed what data scientists spend their time on.

The traditional ML workflow is labor-intensive: data cleaning, feature engineering, model selection, hyperparameter tuning, validation – each step requiring specialized expertise and significant iteration time. AutoML platforms automate most of this pipeline, compressing weeks of work into hours.
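The loop below is a toy version of what such a platform automates: trying several model families and hyperparameters, validating each, and keeping the best. It is a sketch on made-up one-dimensional data, not the search strategy of any specific AutoML product, and every name in it is illustrative.

```python
def knn_predict(train, x, k):
    """Majority label among the k nearest training points (1-D feature)."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    votes = sum(label for _, label in nearest)
    return 1 if votes * 2 > k else 0

def stump_predict(train, x, threshold):
    """Decision stump with a fixed threshold; it ignores the training data."""
    return 1 if x > threshold else 0

def loo_accuracy(data, predict, param):
    """Leave-one-out cross-validation accuracy for one candidate."""
    hits = 0
    for i, (x, y) in enumerate(data):
        train = data[:i] + data[i + 1:]
        hits += predict(train, x, param) == y
    return hits / len(data)

def auto_select(data, search_space):
    """Evaluate every (model, hyperparameter) pair and return the best."""
    results = [
        (loo_accuracy(data, predict, param), name, param)
        for name, predict, params in search_space
        for param in params
    ]
    return max(results, key=lambda result: result[0])

# Hypothetical dataset: (feature, label) pairs.
DATA = [(1.0, 0), (2.0, 0), (3.0, 0), (4.0, 0),
        (6.0, 1), (7.0, 1), (8.0, 1), (9.0, 1)]
SEARCH_SPACE = [
    ("knn", knn_predict, [1, 3, 5]),
    ("stump", stump_predict, [2.0, 5.0, 8.0]),
]

best_score, best_name, best_param = auto_select(DATA, SEARCH_SPACE)
print(best_score, best_name, best_param)
```

Real platforms add feature engineering, smarter search than exhaustive enumeration, and safeguards against overfitting, but the core idea of a validated search over candidates is the same.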

The data scientist's role shifts from execution to oversight: defining the problem correctly, evaluating outputs critically, and ensuring the resulting model is explainable and fit for production. Tools like Google AutoML, Azure Automated ML, and open-source platforms like Auto-Sklearn and TPOT now handle end-to-end pipeline construction with minimal human intervention. More significantly, they're becoming embedded in enterprise data platforms, meaning a business analyst working in Salesforce or SAP can trigger a predictive model build directly from their workflow, without writing a single line of code.

For organizations in healthcare, insurance, and fintech, AutoML is particularly valuable for use cases that are high in volume but moderate in complexity – credit risk scoring, claims triage, patient readmission prediction. These are problems where the value of a good model is clear, the data is relatively structured, and the cost of maintaining a bespoke hand-built model is hard to justify.

4. Cloud migration

In 2026, the cloud remains the most scalable and flexible option for data storage, and often the most cost-effective one. Migration itself can also be budget-friendly, since there is no need to invest in additional physical infrastructure.

Adoption reflects this. Approximately 44% of traditional small businesses use cloud infrastructure or hosting services, compared with 66% of small tech companies. Enterprises show the highest adoption rate at 74%, and the numbers are only expected to grow.

Right now, the cloud migration market is one of the data science trends that is impossible to miss. It is currently worth USD 232.51 billion and is projected to grow at a compound annual growth rate (CAGR) of 28.24%, reaching USD 806.41 billion by 2029.
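For readers who want to sanity-check growth figures like these, compound growth is a one-line calculation. The Python sketch below assumes a five-year horizon, meaning the USD 232.51 billion figure is treated as a 2024 base. That base year is an assumption on our part, not something the figures state.

```python
def project_value(start_value, cagr, years):
    """Compound a starting value forward at a fixed annual growth rate."""
    return start_value * (1 + cagr) ** years

def implied_cagr(start_value, end_value, years):
    """Annual growth rate that turns start_value into end_value."""
    return (end_value / start_value) ** (1 / years) - 1

# Assumption: five compounding years between the base value and 2029.
projected = project_value(232.51, 0.2824, 5)
rate = implied_cagr(232.51, 806.41, 5)
print(round(projected, 2), round(rate, 4))
```

Under that assumption, the projection and the stated growth rate agree with each other to within rounding.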

For data-heavy organizations, the migration decision increasingly centers on architecture rather than platform. Lift-and-shift migrations – moving existing workloads to cloud VMs without redesigning them – consistently underdeliver. The organizations extracting the most value from cloud migration are those that use the transition as an opportunity to re-architect around cloud-native data patterns: event streaming, object storage, serverless compute, and managed pipeline orchestration.

The compliance dimension remains the most complex variable. In healthcare, cloud migration must satisfy HIPAA safeguards for protected health information. Financial institutions face SOC 2 and PCI DSS constraints. The cloud providers have responded with dedicated compliance offerings – AWS GovCloud, Azure Government, Google Cloud's Assured Workloads – but navigating these correctly requires expertise that goes beyond standard cloud engineering.

5. Cloud-native

Cloud-native describes building software designed from the ground up for cloud environments – microservices, containers, declarative infrastructure, and continuous delivery. In 2026, these patterns have become the default for new data platform development, not because they are fashionable, but because the operational economics are compelling.

A cloud-native data pipeline scales horizontally under load, recovers automatically from failures, and updates without downtime. For organizations processing event-driven data – transactions, IoT feeds, real-time clinical data – this architecture is effectively non-negotiable.
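Automatic recovery usually happens at several layers, from the orchestrator restarting containers down to individual steps retrying transient errors. As a small illustration of the step-level part only, here is a Python sketch of retry with exponential backoff. The function names and the simulated failure are hypothetical, and real pipelines would normally rely on their orchestrator's built-in retry settings instead of hand-rolled loops.

```python
import time

def run_with_retries(step, max_attempts=3, base_delay=0.01):
    """Run a pipeline step, retrying transient connection errors.

    The delay doubles after each failed attempt (exponential backoff).
    The last failure is re-raised so the caller can see it.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except ConnectionError:
            if attempt == max_attempts:
                raise
            time.sleep(base_delay * 2 ** (attempt - 1))

# Simulated step that fails twice before succeeding.
calls = {"count": 0}

def flaky_load():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("transient failure")
    return "loaded"

result = run_with_retries(flaky_load)
print(result, calls["count"])
```

Retries like this are only safe when the step is idempotent, meaning that running it twice produces the same outcome as running it once.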

Managed services like AWS EKS, Azure AKS, and orchestration platforms like Airflow and Prefect have reduced the operational burden significantly, making cloud-native accessible beyond large enterprise engineering teams. The transition is not trivial, but organizations that have completed it consistently report faster iteration cycles and meaningfully better ability to adopt new AI capabilities as they emerge.

6. Augmented consumer interface

Augmented consumer interfaces adapt in real time based on behavioral signals, context, and predictive models, rather than waiting for explicit user input. In 2026, converging technologies have made this commercially viable at scale: LLMs handle complex conversational queries, computer vision enables AR experiences, and real-time personalization engines update interface behavior within milliseconds.

The most advanced implementations are moving toward "zero-UI" – systems that infer user needs rather than ask for them. A clinical dashboard that surfaces the three most relevant patient flags at shift start. A banking app that offers a product at the exact moment spending patterns suggest a need.
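The dashboard example boils down to a ranking problem: score every candidate item, then surface only the top few. Here is a minimal Python sketch of that final selection step. The patient flags and relevance scores are invented for illustration, and in practice the scores would come from a predictive model.

```python
import heapq

def top_flags(flags, limit=3):
    """Return the `limit` highest-scoring flags, most relevant first."""
    return heapq.nlargest(limit, flags, key=lambda flag: flag["score"])

# Hypothetical flags with model-assigned relevance scores.
flags = [
    {"patient": "A", "flag": "sepsis risk", "score": 0.91},
    {"patient": "B", "flag": "missed medication", "score": 0.42},
    {"patient": "C", "flag": "fall risk", "score": 0.77},
    {"patient": "D", "flag": "abnormal lab result", "score": 0.85},
    {"patient": "E", "flag": "overdue checkup", "score": 0.20},
]

shortlist = top_flags(flags)
print([item["patient"] for item in shortlist])
```

Keeping the selection step this simple and visible also helps with the transparency concern: it is easy to show a user why an item was surfaced when the ranking rule is explicit.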

In regulated industries, the design challenge is balancing personalization capability with transparency and user control – interfaces that adapt too aggressively risk manipulating behavior in ways that create regulatory and reputational exposure.

7. Data regulation

In 2026, the sheer volume of data online makes protecting data privacy a top priority for every business, regardless of industry. This is especially true for data-sensitive domains like healthcare and insurance.

Companies should watch several new data regulation acts in 2026, including:

- State privacy laws in the USA, including the Montana Consumer Data Privacy Act, the Florida Digital Bill of Rights, the Texas Data Privacy and Security Act, the Oregon Consumer Privacy Act, and the Delaware Personal Data Privacy Act.

- In 2024, Canada introduced the Consumer Privacy Protection Act (CPPA), the Personal Information and Data Protection Tribunal Act, and the Artificial Intelligence and Data Act (AIDA). You can expect enhanced individual control over personal data and more substantial penalties for non-compliance from these acts.

- In the EU, an ePrivacy Regulation (ePR) finalized in 2024 establishes regulations on cookie usage and apps like WhatsApp and Facebook Messenger.

- AI regulation entered a pivotal phase in 2024 with the long-awaited EU AI Act, which will be fully applicable on August 2, 2026. This general EU legislation adopts a category-based approach to different types of artificial intelligence.

- The Digital Services Act (DSA) is an EU regulation, fully applicable since February 2024, that sets rules for removing illegal and harmful content from digital platforms.

Naturally, these legislative acts will push businesses to audit their current processes for alignment with the new requirements.

8. AI as a Service (AIaaS)

AIaaS has matured from an experimental option into a mainstream delivery model. Organizations now access foundation models – OpenAI GPT-4, Google Gemini, Anthropic Claude – through APIs, with usage-based pricing and no infrastructure to maintain.

For mid-market companies in healthcare, insurance, and fintech, this has effectively eliminated the barrier to entry for sophisticated AI applications. A digital health startup can integrate a clinically-tuned LLM for patient triage without hiring ML engineers. An insurer can deploy a claims summarization tool in weeks rather than quarters.

The strategic question AIaaS raises is differentiation. If competitors access the same models at the same price, advantage shifts to data quality, domain specificity, and implementation effectiveness. Organizations that treat AIaaS as plug-and-play without investing in fine-tuning, retrieval augmentation, or proprietary data assets will find the competitive gap narrowing rather than widening.
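Retrieval augmentation is one of the more approachable ways to bring proprietary data into a shared model. The idea is to look up relevant internal documents first and pass them to the model along with the question. The Python sketch below shows only that retrieval and prompt-building step. Simple word overlap stands in for the embedding search a real system would use, the documents are invented, and no vendor API is called.

```python
def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(text.lower().split())

def retrieve(question, documents, top_k=1):
    """Rank documents by word overlap with the question.

    Assumption: overlap counting is a stand-in for embedding similarity.
    """
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, documents):
    """Combine the retrieved context and the question into one prompt."""
    context = "\n".join(retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

# Hypothetical internal policy snippets.
documents = [
    "Water damage claims require photos within 14 days.",
    "Auto claims need a police report for theft.",
]
question = "What do water damage claims require?"
prompt = build_prompt(question, documents)
print(prompt)
```

The model behind the API is the same one competitors can buy. The documents in the context are not, which is where the differentiation comes from.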

9. Python's increasing role

Python's dominance in data science rests on ecosystem depth – NumPy, Pandas, PyTorch, Hugging Face, LangChain – libraries covering every stage of the ML lifecycle, with new model implementations typically arriving within days of a research release.

In 2026, its reach has expanded further: FastAPI for production APIs, Streamlit and Gradio for rapid application deployment, and Polars and Prefect pushing into data engineering territory previously held by Java and Scala.

For practitioners, Python fluency is table stakes, but as AI-assisted code generation matures, writing Python is becoming less of a differentiator. What commands the highest value now is knowing what to build and why: domain expertise, model evaluation judgment, and the ability to communicate outputs to non-technical stakeholders.

For organizations, the risk is dependency on a fast-moving open-source ecosystem. Python-based ML systems in production without a robust MLOps practice accumulate technical debt that eventually demands serious attention.

What do experts say about new data science technologies?

In recent years, data science has undergone a dramatic shift. What was once a siloed analytical practice is now deeply embedded in enterprise transformation.

The rise of AI, especially generative and agentic systems, has pushed data science into the spotlight like never before. But with that attention comes confusion. Where exactly is this field heading? And how should organizations – and data scientists themselves – adapt?

To understand where data science is going in 2026 and beyond, it's essential to hear from those shaping its direction. Here's what respected thought leaders say about the recent developments in data science – and what it means for your organization.

From tools to autonomous agents

We're no longer just building tools. We're building agents – intelligent systems capable of acting autonomously and collaborating with other systems to achieve goals. This shift, often referred to as Agentic AI, is one of the most talked-about trends among technology leaders.

Thomas H. Davenport, a renowned analytics professor, and Randy Bean, a data leadership adviser, describe the emergence of agentic AI in their article:

"Agentic AI seems to be on an inevitable rise: Everybody in the tech vendor and analyst worlds is excited about the prospect of having AI programs collaborate to do real work instead of just generating content, even though nobody is entirely sure how it will all work."

The excitement is palpable, but so is the uncertainty. There's no shortage of powerful models. What's missing, for many, is a roadmap for how to apply them in ways that truly drive business outcomes. And that's the paradox: the more advanced AI becomes, the more it exposes the underlying complexity of the data science ecosystem.

AI is not a shortcut – it's a system

While many hoped AI would offer a shortcut to better results, it's becoming clear that AI isn't a shortcut – it's a system. A powerful one, yes, but only as effective as the infrastructure, processes, and data it's built on.

This is where the real shift is happening. As Bernard Marr, world-renowned futurist, board advisor, and author, explains:

"The focus will shift from model optimization to data quality as organizations recognize that better data, not just better algorithms, is the key to superior AI performance."

The implications are significant. Organizations leading the AI race are those investing in data governance, operational integration, and cross-functional alignment, not just in hiring more data scientists or experimenting with the latest LLM APIs.

That brings us to the human question: What happens to the data scientist in this evolving landscape?

So, what happens to the data scientist?

As AI takes over many once-core tasks – data visualization, predictive modeling, even code generation – some fear the data scientist role is becoming obsolete. But automation doesn't mean elimination. The role is being reshaped, not erased. The real value is shifting away from execution and toward insight, oversight, and integration.

Chris Mattmann, Chief Technology and Innovation Officer at NASA's Jet Propulsion Laboratory, speaks candidly about this transition:

"Data analysis will be replaced in the next five to 10 years, done better by AI than humans. But training new AI and refining data will still have a big place(...).

AI is data-hungry for fuel, and understanding math, statistics, and how to evaluate data science and AI will be much more important than building it."

His message is clear: the future of data science belongs not to those who simply build models, but to those who understand where and why to apply them. Domain expertise, ethical reasoning, and contextual storytelling are emerging as the most irreplaceable skills. As Mattmann emphasizes, it's also about building supportive networks and adapting to AI's new demands with strategic focus and scientific discipline.

As AI systems grow more autonomous and the ecosystem becomes more complex, data science evolves into a cross-functional discipline tightly integrated with business strategy. The next frontier is smarter systems, built with purpose, refined by data, and guided by context.

At Binariks, we recognize this shift. Our Center of Excellence unites engineers, data scientists, solution architects, and domain experts to transform new data technology into scalable, business-aligned solutions. It's not just about staying current. It's about staying ahead.

What data science trends will be widespread across industries?

Beyond the data science trends that will shape most industries, some trends are more industry-specific because of the particular benefits they bring. Let's focus on the domains in which Binariks has extensive experience.

Medtech

In medicine, the most critical goal is for professionals to benefit from technology as a tool that supports their decision-making and makes their work faster and more accurate. However, stakeholders must maintain a delicate balance, as doctors and caretakers should not over-rely on technology.

1. Data democratization is one of the emerging trends in data science that suits medical technology particularly well. Medical establishments employ both medical and non-medical staff, and all of them need to understand the technology for it to work. Knowledgeable doctors and nurses enhance patient care through informed decision-making.

Example: Large frontrunner companies like Philips and Siemens Healthineers use data science to improve diagnostic tools and patient care. Third-party companies like Tata Consultancy Services (TCS) assist medical companies in making healthcare data accessible.

2. Explainable artificial intelligence (XAI) is a type of AI that lets humans keep intellectual oversight over its output. Unlike a traditional black-box model, XAI helps pinpoint where and how a model might go wrong or where biases exist. In MedTech, this kind of AI can assist in treatment and decision planning. Spending diagnostic time more effectively leaves more time for actual treatment, with room for higher patient satisfaction and better outcomes.

Example: IBM Watson Health uses XAI in the decision-making process.
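One simple form of explainability is showing how much each input pushed a prediction up or down. The Python sketch below does this for a small logistic model, where each feature's contribution is just its coefficient times its value. The coefficients and patient record are hypothetical, and this is one basic technique among many, not a description of how any named vendor implements XAI.

```python
import math

# Hypothetical coefficients of an already-trained logistic model.
COEFFICIENTS = {
    "age_over_65": 0.8,
    "prior_admissions": 0.6,
    "on_anticoagulants": 0.4,
}
INTERCEPT = -2.0

def explain(patient):
    """Return the predicted risk and per-feature contributions, largest first."""
    contributions = {
        name: COEFFICIENTS[name] * patient[name] for name in COEFFICIENTS
    }
    logit = INTERCEPT + sum(contributions.values())
    risk = 1 / (1 + math.exp(-logit))
    ranked = sorted(
        contributions.items(), key=lambda item: abs(item[1]), reverse=True
    )
    return risk, ranked

patient = {"age_over_65": 1, "prior_admissions": 3, "on_anticoagulants": 0}
risk, ranked = explain(patient)
print(round(risk, 3), ranked)
```

A clinician reading this output sees not only the risk estimate but also which factor drove it, which makes it easier to spot a prediction that rests on the wrong reasons.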

Insurtech

Insurance as a sector is moving towards faster detection of issues and automating some basic human interactions so that professionals can focus on more complex tasks.

1. Data unification. Consolidating data from various sources helps insurance companies assess risk and process claims better. It is also a step towards reconciliation.

Example: Companies like Progressive and Allstate use data unification for personalized insurance premiums and fraud detection.

2. Graph analytics are used to detect fraud patterns and understand customer networks to tailor insurance products.

Example: Large financial institutions use graph analytics for fraud detection and risk assessment.

3. Large language models (LLMs) transform customer service and claims processing by automating interactions and analyzing customer feedback more effectively. They can also help with fraud detection and risk assessment.

Example: Most large banks, including JPMorgan Chase and Bank of America, now use large language models.
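To show what graph analytics means for fraud detection in practice, here is a minimal Python sketch. It links claims that share a phone number or address and reports groups of connected claims, which is a basic connected-components search. The claim IDs and attributes are invented, and real systems use far richer graphs and scoring.

```python
from collections import defaultdict

def find_rings(claims, min_size=3):
    """Group claims linked by shared attributes; keep groups of min_size or more."""
    # Index claims by each attribute value they contain.
    by_attribute = defaultdict(list)
    for claim_id, attributes in claims.items():
        for attribute in attributes:
            by_attribute[attribute].append(claim_id)

    # Two claims are neighbors when they share at least one attribute.
    neighbors = defaultdict(set)
    for claim_ids in by_attribute.values():
        for claim_id in claim_ids:
            neighbors[claim_id].update(c for c in claim_ids if c != claim_id)

    # Collect connected components with an iterative depth-first search.
    seen, rings = set(), []
    for start in claims:
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(neighbors[node] - component)
        seen |= component
        if len(component) >= min_size:
            rings.append(sorted(component))
    return rings

# Hypothetical claims with contact details attached.
claims = {
    "C1": {"phone:555-0101", "addr:12 Elm St"},
    "C2": {"phone:555-0101"},
    "C3": {"addr:12 Elm St"},
    "C4": {"phone:555-0199"},
}
rings = find_rings(claims)
print(rings)
```

Note that C2 and C3 share nothing directly. They end up in the same group only through C1, which is exactly the kind of indirect link that is hard to see in a flat table.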

Fintech

In fintech, the latest trends in data science mainly focus on processing large amounts of data.

1. Data-driven consumer experience. Banks increasingly use AI to personalize banking experiences. For instance, they recommend financial products or advise on investments.

Example: Banks like Wells Fargo and Bank of America build data-driven consumer experiences into their services.

2. Adversarial machine learning (AML) is a relatively new field in AI that focuses on the security aspects of machine learning systems. This is especially useful in areas like fraud detection and algorithmic trading.

Example: JPMorgan Chase employs adversarial machine learning to safeguard its AI systems.

3. Data fabric is an architecture and set of services that provides consistent data management across various environments. Managing and analyzing large, complex datasets is vital for banks to gain real-time insights for better decision-making and risk management.

Example: Large banks like Citibank or HSBC use data from different sources and integrate it into a cohesive platform. Such data includes transaction records, customer interactions, and market analytics.

Conclusion

As data volumes continue to grow and AI becomes deeply integrated into core operations, it's clear that innovations in data science are no longer optional – they're foundational to business success. The data science technology trends mentioned in this article, from agentic AI to explainable models and scalable cloud-native solutions, are already transforming how organizations make decisions, serve customers, and stay competitive.

Yet adopting these technologies requires more than technical execution. It takes strategic focus, domain expertise, and the ability to translate data into real business outcomes.

At Binariks, we bring this holistic approach to every engagement, supporting you across the full lifecycle – from business analysis and AI solution planning to data preparation, ML modeling, and integration. We also ensure quality assurance and ongoing support, so your AI initiatives deliver measurable impact with clarity, speed, and confidence.

Our AI Center of Excellence unites engineers, data scientists, solution architects, and industry experts to turn emerging technologies into scalable, secure, and business-aligned solutions.

The future of data isn't just big – it's smart. Let's build it together.
